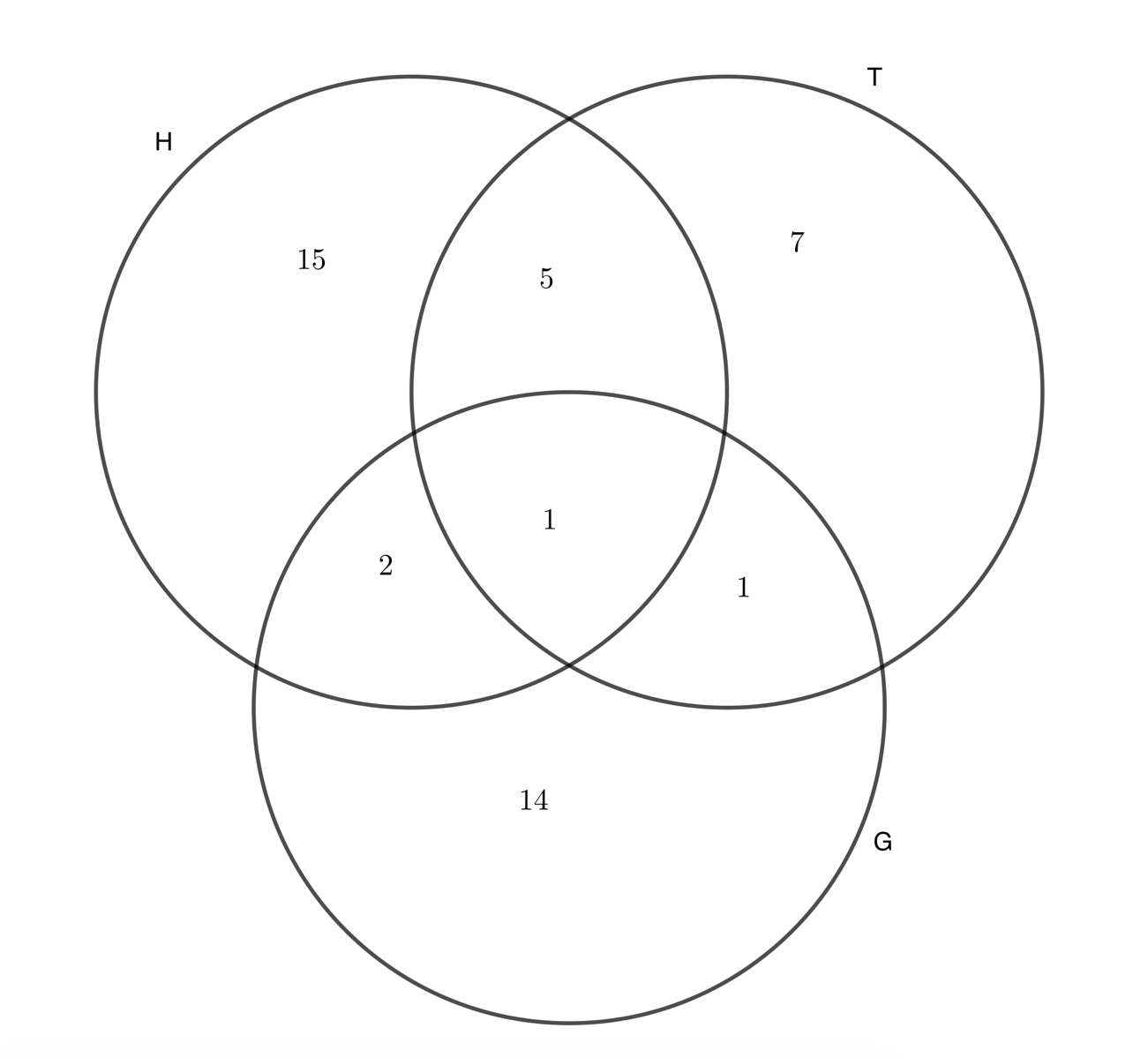

A group of friends play a range of sports.

23 play hockey, 14 play tennis, and 18 play golf. 6 play hockey and tennis, 2 play tennis and golf, and 3 play golf and hockey. 1 person plays all three.

How many in the group play at least one of the three sports?

This is easy: just add up all the numbers in the diagram to get 45.

Try the calculation again, but this time, do so simply by replacing the dots with either \(+\) or \(-\) in:

\(23\dots14\dots18\dots6\dots3\dots2\dots1\)

\(23+14+18-6-3-2+1=45\)

We can write this result in set-theory notation:

\(\begin{align*}c(H∪T∪G)=c(H)&+c(T)+c(G)\\

&-c(H∩T)-c(T∩G)-c(G∩H)\\

&+c(H∩T∩G)\end{align*}\)

where I’ve used the letter for “count the number elements”.

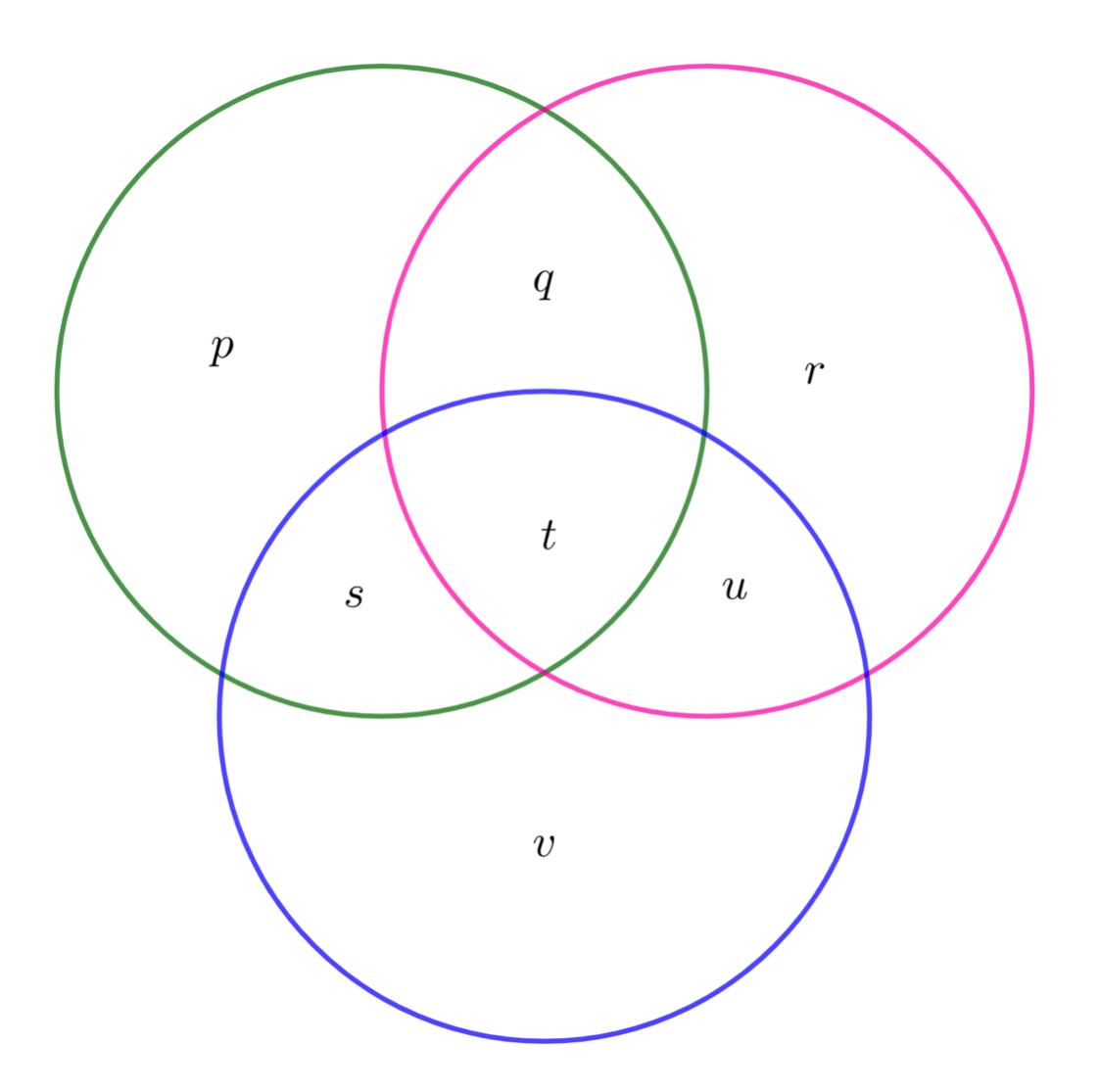

Explain this result using this Venn diagram:

\(\begin{align*} c(H)+&c(T)+c(G)-c(H\cap T)-c(T\cap G)-c(G\cap H)+c(H\cap T\cap G)\\[4pt] =&(p+q+s+t)+(q+r+t+u)+(s+t+u+v)\\ &\quad -(q+t)-(u+t)-(s+t)+t\\[4pt] =&p+q+r+s+t+u+v\\[4pt] =&c(H\cup T\cup G) \end{align*}\)

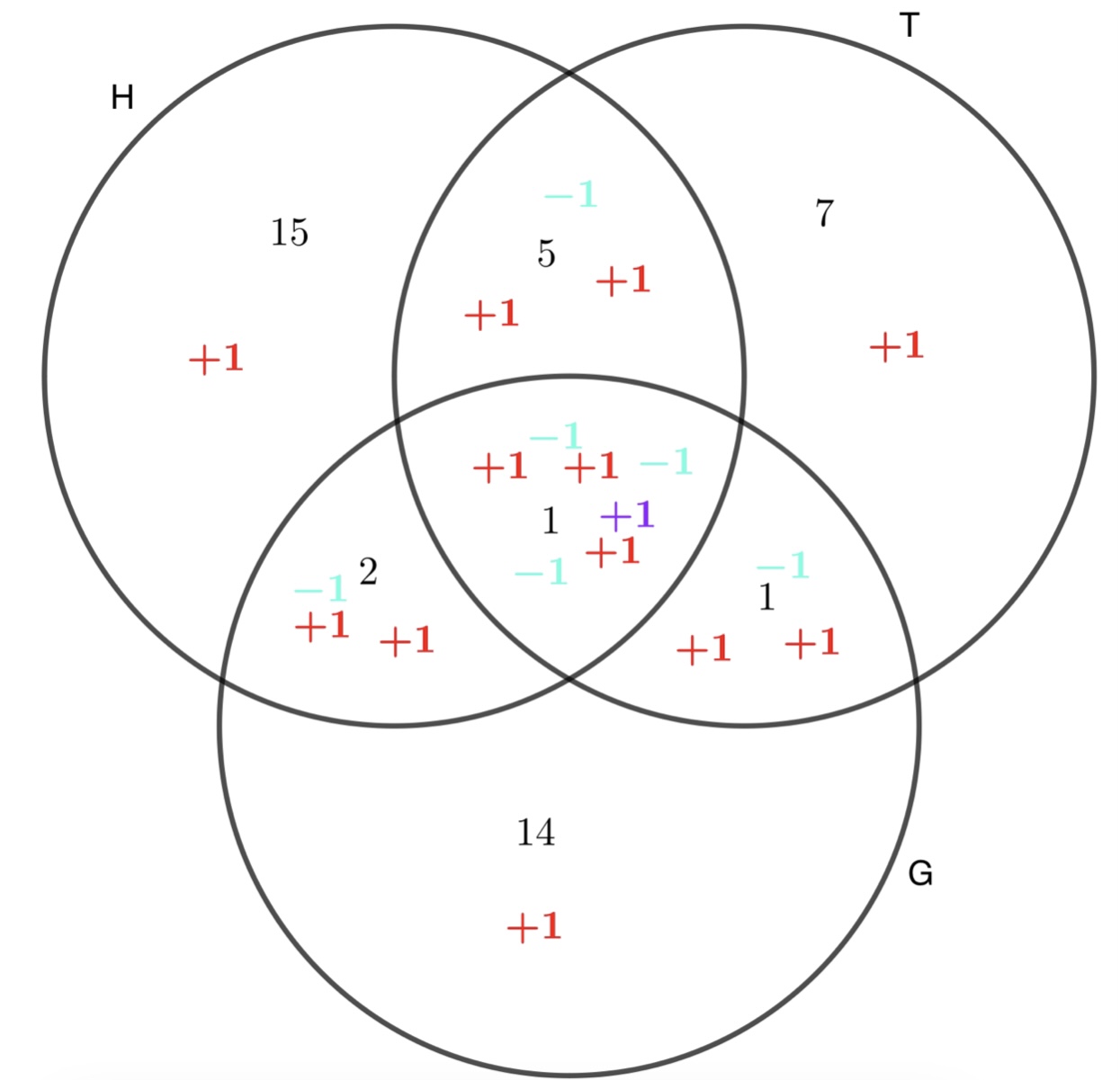

Now explain the result using this Venn diagram:

The red \(+1\)s indicate how many times each region has been counted when we have added \(24+14+18\). The blue \(-1\)s indicate how many times each region has been discounted when we have subtracted \(-6-3-2\). The purple \(+1\) indicates adding back the person who plays all three sports. The total of \(\pm1\)s in each region is \(1\), so everyone has been counted exactly once.

Extending this to four sports is quite tricky with Venn diagrams, but we can use a counting argument that essentially mimics the second of our three-sport Venn diagrams.

Use a counting argument to explain how to extend this formula to include rugby as well (or perhaps we could use any four sets \(A,B,C,D\)).

If we want to know how many elements there are in the union of four sets, we can add up the number in each set individually. This counts any elements that are only in one of the sets exactly once.

However, it counts those elements in exactly two sets twice: it counts those in \(A\cap B\) once for being in \(A\) and once for being in \(B\). So we must take away the number of elements in \(A\cap B\). Likewise for the other pairs of sets to get:

\(\begin{align*}c(A)&+c(B)+c(C)+c(D)\\

&-c(A\cap B)-c(A\cap C)-c(A\cap D)-c(B\cap C)-c(B\cap D)-c(C\cap D)\end{align*}\)

Now we have counted those in exactly one or exactly two sets the right number of times (once).

But this is still not good enough, because if an element is in \(A\cap B\cap C\), then we have added it three times in the first step and taken it away three times in the second step, so we must add it back in again. This means that we must add the number of elements in \(A\cap B\cap C\) back in to make sure it is counted, and the same for all the other collections of three sets. This gives

\(\begin{align*}

&c(A)+c(B)+c(C)+c(D)\\

-&c(A\cap B)-c(A\cap C)-c(A\cap D)-c(B\cap C)-c(B\cap D)-c(C\cap D)\\

+&c(A\cap B\cap C)+c(A\cap B\cap D)+c(A\cap C\cap D)+c(B\cap C\cap D)

\end{align*}\)

And lastly, an element in \(A\cap B\cap C\cap D\) has been added in \(\binom{4}{1}=4\) times, taken away \(\binom{4}{2}=6\) times, and added in \(\binom{4}{3}=4\) times. Overall it has been added in twice, but we only want it once, so we must take it away again. This means that we must subtract the number of elements in \(A\cap B\cap C\cap D\), so we end up with

\(\begin{align*}

c(A\cup B\cup C\cup D)=&c(A)+c(B)+c(C)+c(D)\\

&-c(A\cap B)-c(A\cap C)-c(A\cap D)\\

&-c(B\cap C)-c(B\cap D)-c(C\cap D)\\

&+c(A\cap B\cap C)+c(A\cap B\cap D)\\

&+c(A\cap C\cap D)+c(B\cap C\cap D)\\

&-c(A\cap B\cap C\cap D)

\end{align*}\)

Turn this into a theorem about probabilities.

If we pick an element of our universe of sets at random, we can see straight away that

\(\begin{align*}

P(A\cup B\cup C\cup D)=&P(A)+P(B)+P(C)+P(D)\\

&-P(A\cap B)-P(A\cap C)-P(A\cap D)\\

&-P(B\cap C)-P(B\cap D)-P(C\cap D)\\

&+P(A\cap B\cap C)+P(A\cap B\cap D)\\

&+P(A\cap C\cap D)+P(B\cap C\cap D)\\

&-P(A\cap B\cap C\cap D)

\end{align*}\)

by simply dividing everything in the previous formula by the total number of elements in our universe.

You can probably see intuitively how to extend his theorem to \(n\) sets, but you might like to try to come up with a convincing argument. Or maybe not. At any rate, here’s my attempt (though don’t feel you need to spend all that much time on it):

To count the number of elements in \(n\) sets, add the sizes of the individual sets. Now the elements that are only in one set have been counted the right number of times. Then we must subtract the number of elements in each of the pairs \(A\cap B\), of which there are \(\binom{n}{2}\).

At this point, the elements that are in exactly two sets have been counted exactly once, but those in exactly three sets have been added in 3 times in the first step, but subtracted \(\binom{3}{2}=3\) times in the second step, which means they have not been counted at all. So we’ll add in the number of elements in each of the trios \(A\cap B\cap C\), of which there are \(\binom{n}{3}\).

Now all elements in at most 3 sets have been counted once. Those in exactly 4 sets have been added \(\binom{4}{1}=4\) times, subtracted \(\binom{4}{2}=6\) times, and added \(\binom{4}{3}=4\)times, which, on balance, means they have been counted twice. So we’ll subtract the number of elements in each of the quartets \(A\cap B\cap C\cap D\), of which there are \(\binom{n}{4}\).

Now all elements in at most 4 sets have been counted once. Those in exactly 5 sets have been added \(\binom{5}{1}=5\) times, subtracted \(\binom{5}{2}=10\) times, and added \(\binom{5}{3}=10\) times, and subtracted \(\binom{5}{4}=5\) times, which, on balance, means they have been counted zero times.

And so on.

What are the alternating sums of the rows of Pascal's Triangle? Alternating means making the terms alternately \(+\) and \(-\). Why is this? Think about how this relates to our work so far on PIE.

Expand \((1+x)^n\) when \(x=-1\).

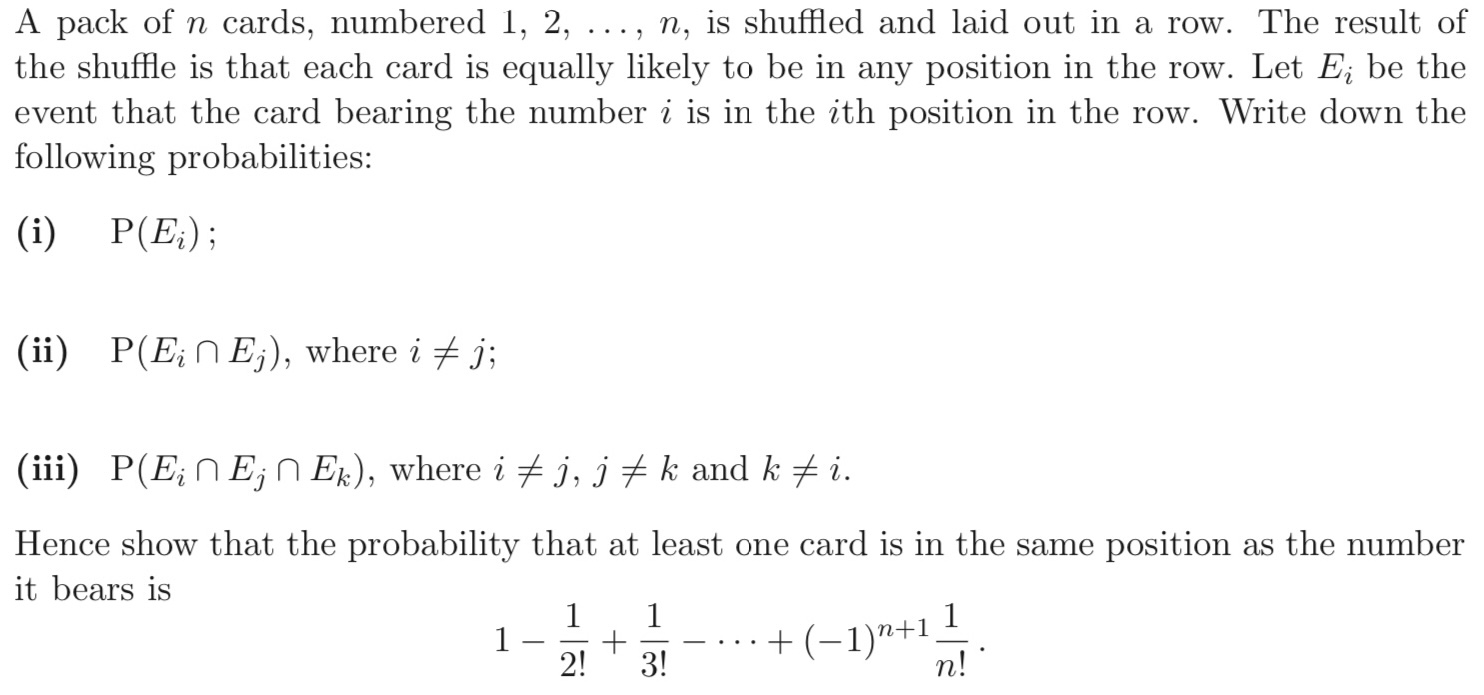

Here is a STEP PIE question:

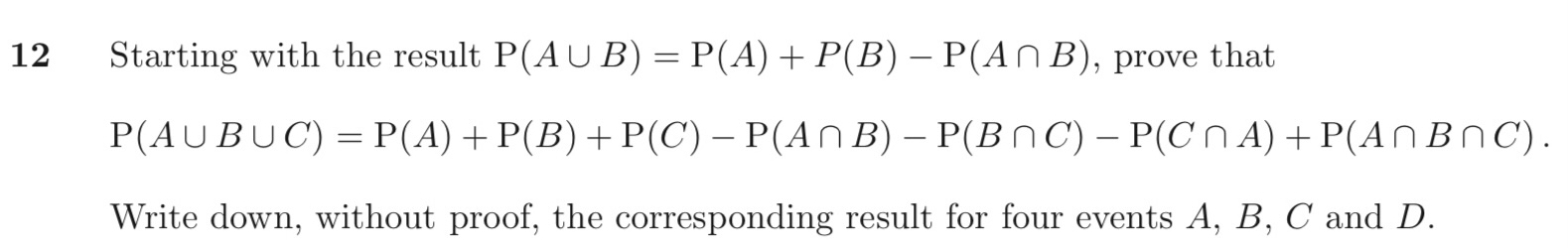

Actually, the question wants you to use a different approach to those you have just been thinking about. It wants you essentially to take the first steps on a path to proving the principle by induction. This may be a more rigorous proof, but it doesn’t help us understand what’s going on in the way that we do now from thinking about Venn diagrams and counting arguments. Nonetheless, we had better do the question as set!

So, what does the question want us to do?

It wants us to assume that \(P(A\cup B)=P(A)+P(B)-P(A\cap B)\), which would be the base case in an inductive proof, and from this alone, prove the parallel theorem for three sets.

Although it doesn’t ask us to prove the two-set case, we can easily do so:

Prove that \(P(A\cup B)=P(A)+P(B)-P(A\cap B)\)

This is just a simpler case of the various arguments we dealt with at the start. I’ll leave this to you.

Next, we need to use this to derive the three-set case.

By writing \(B\cup C\) in place of \(B\), extend the theorem about \(P(A\cup B)\) to a theorem about \(P(A\cup B\cup C)\).

\(\begin{align*} c(A\cup B\cup C)&=c(A\cup (B\cup C))\\[4pt] &=c(A)+c(B\cup C)-c(A\cap ( B\cup C))\\ \end{align*}\)

At this point we are a bit stuck. We need another result:

Explain why \({A\cap (B\cup C)=(A\cap B)\cup (A\cap C)}\).

The easiest way to see this is on a Venn diagram, which again I will leave to you.

Incidentally, another way to say

\(A\cap (B\cup C)=(A\cap B)\cup (A\cap C)\)

is “intersection is distributive over union”, just as “multiplication is distributive over addition” means that

\(a\times(b+c)=a\times b+a\times c\).

Notice, though, that union is distributive over intersection, whereas addition is not distributive over multiplication.

Back to the question: with this result, we can now tackle:

By writing \(B\cup C\) in place of \(B\), extend the theorem about \(P(A\cup B)\) to a theorem about \(P(A\cup B\cup C)\).

\(\begin{align*} c(A\cup B\cup C)&=c(A\cup (B\cup C))\\[4pt] &=c(A)+c(B\cup C)-c(A\cap ( B\cup C))\\[4pt] &=c(A)+c(B)+c(C)\\ &\quad-c(B\cap C)-c((A\cap B)\cup (A\cap C))\\[4pt] &=c(A)+c(B)+c(C)-c(B\cap C)\\ &\quad-\big[c(A\cap B)+c(A\cap C)-c(A\cap B\cap C)\big]\\[4pt] &=c(A)+c(B)+c(C)-c(B\cap C)-c(A\cap B)-c(A\cap C)\\ &\quad+c(A\cap B\cap C)\\ \end{align*}\)

At this point, the question asks us to extend the theorem to four sets simply be pattern-spotting, but of course we already looked at this in more detail earlier.

The next part of the question is actually going to force us to extend the theorem to any number of sets, which, in fact, we have also examined pretty thoroughly:

What are \(P\left(E_1\right),\;P\left(E_2\right),\;P\left(E_3\right),\dots\)?

The probability that card 1 is in its correct place is \(\frac{1}{n}\). This is also the probability that card 2 is in its correct place, and so on. So

\(P\left(E_i\right)=\frac{1}{n}\)

Find \(P\left(E_1\cap E_2\right)\), the probability that card 1 is in position 1 and card 2 is in position 2 after the shuffle. Then find \(P\left(E_i\cap E_j\right)\) for any distinct \(i,\,j\).

Again, the probability that card 1 is in position 1 is \(\frac{1}{n}\). Once card 1 has taken its position, card 2 must go in position 2, but there are now only \(n-1\) possible places, so the probability that both cards are in their original positions is \(\frac{1}{n}\times\frac{1}{n-1}\).

All the cards are the same in this respect, so

\(P\left(E_i\cap E_j\right)=\frac{1}{n}\times\frac{1}{n-1}\).

when \(i\ne j\).

Find \(P\left(E_i\cap E_j)\cap E_k\right)\) for any distinct \(i, \,j,\,k\)

This is easy now:

\(P\left(E_i\cap E_j \cap E_k\right)=\frac{1}{n}\times\frac{1}{n-1}\times \frac{1}{n-2}\)

when \(i, \,j,\,k\) are distinct.

Take \(n=4\). What does our formula for the size (or probability) of the union of the four sets look like now?

\(\begin{align*} P\left(E_1\cup E_2\cup E_3\cup E_4\right)=&\binom{4}{1}P\left(E_1\right)-\binom{4}{2}P\left(E_1\cap E_2\right)\\ &+\binom{4}{3}P\left(E_1\cap E_2\cap E_3\right)-P\left(E_1\cap E_2\cap E_3\cap E_4\right) \end{align*}\)

Where do the binomial coefficients come from here?

We have to subtract the sum of all the probabilities of pairs of cards being in the right place. But these are all the same probabilities, and there are \(\binom{4}{2}\)pairs. The same for the other binomials.

\(P\left(E_1\cup E_2\cup E_3\cup E_4\right)\) is just the probability that at least one card ends up in its starting position.

Extend this to any \(n\).

\(\small \begin{align*}

P(&\text{at least one ends up in the right place})\\[4pt]

&=P\left(E_1\cup E_2\cup E_3\cup\dots\cup E_n\right)\\[4pt]

&=\binom{n}{1}\frac{1}{n}-\binom{n}{2}\frac{1}{n(n-1)}+\binom{n}{3}\frac{1}{n(n-1)(n-2)}-\dots -(-1)^n\binom{n}{n}\frac{1}{n!}\\[4pt]

&=\frac{n!}{1!(n-1)!n}-\frac{n!}{2!(n-2)!n(n-1)}+\frac{n!}{3!(n-3)!n(n-1)(n-2)}-\dots-(-1)^n\frac{1}{n!}\\[4pt]

&=\frac{1}{1!}-\frac{1}{2!}+\frac{1}{3!}-\dots-(-1)^n\frac{1}{n!}

\end{align*}\)

which is equivalent to the formula the question asks us to derive.

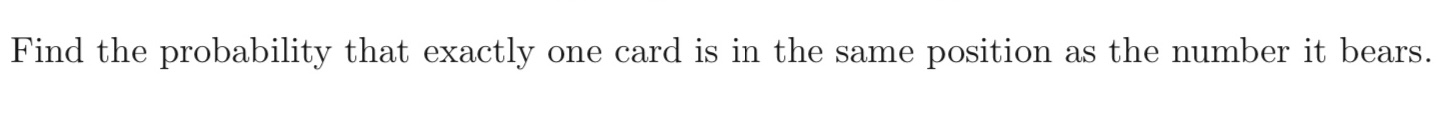

Finally, the question asks us to

Have a go at this.

Firstly, notice that

\(\begin{align*}P&(\text{no cards end up in the right position})\\[4pt]

&=1-P(\text{at least one card ends up in its right position})\\[4pt]

&=1-\left(\frac{1}{1!}-\frac{1}{2!}+\frac{1}{3!}-\dots-(-1)^n\frac{1}{n!}\right)\\[4pt]

&=\frac{1}{0!}-\frac{1}{1!}+\frac{1}{2!}-\frac{1}{3!}+\dots+(-1)^n\frac{1}{n!}\end{align*}\)

Let's call this probability \(P_n\).

If exactly one card is in the right position, then we can just ignore this card completely and consider only the remaining pack of \(n-1\) cards. Once we have shuffled this smaller pack, we can then reinsert the removed card in its original position. In other words, the probability we are looking for is just the probability that, with a pack of \(n-1\) cards, no card ends up in its original position, which is

\(\begin{align*}

\frac{1}{0!}-\frac{1}{1!}+\frac{1}{2!}-\frac{1}{3!}+\dots +(-1)^{n-1}\frac{1}{(n-1)!}

\end{align*}\)

What values does this formula assign to \(P_0\) and \(P_1\)? Explain how this relates to the problem.

The formula gives \(P_1=0\), which simply says that with 1 card, the card cannot end up in the wrong position. Then the formula gives \(P_0=1\). Why? When \(n=0\), no card is out of place in any shuffle, so we could reasonably say that \(P_0=1\).

Work out the probability that no card is in the right place for \(n=2,3,4,5,6,7,8,9\)

We now know that this probability is

\(\begin{align*}

P_n=\frac{1}{2!}-\frac{1}{3!}+\frac{1}{4!}+\dots+(-1)^n\frac{1}{n!}

\end{align*}\) when \(n\ge 2\), so

\(\begin{align*}&P_2=0.5,\;P_3=0.3333,\;P_4=0.375,\;P_5=0.3667,\;\\

&P_6=0.3681,\;P_7=0.3679,\;P_8=0.3679,\;P_9=0.3679\,\dots\end{align*}\)

Clearly the probabilities converge pretty rapidly to a value around \(37\%\). Let’s see why:

Perhaps you have come across this infinite sum before:

\(\begin{align*}

e=\frac{1}{0!}+\frac{1}{1!}+\frac{1}{2!}+\frac{1}{3!}+\frac{1}{4!}+\dots

\end{align*}\)

Or you may know that a power series for \(e^x\) is

\(\begin{align*}

e^x=\frac{1}{0!}+\frac{x}{1!}+\frac{x^2}{2!}+\frac{x^3}{3!}+\frac{x^4}{4!}+\dots

\end{align*}\)

Use this formula to find \(e^{-1}\)

\(\displaystyle e^{-1}=1-1+\frac{1}{2!}-\frac{1}{3!}+\frac{1}{4!}-\dots=\lim_{n\to\infty}P_n=\frac{1}{e}=0.37....\)

and it only takes about 6 or 7 cards before the probability of no card being in its original place, is stable, and very close to \(37\%\).

Yes! The question leads us to consider card shuffling through the lens of the Principle of Inclusion and Exclusion. This is far from the only way to reach the result you are asked to prove. It’s not even clear to me that, instructive though it is, it is the easiest way. Fortunately, I have written about some other ways to approach the same problem in the article “Derangements” that you will find on my own website. So head over to mathsinsight.co.uk for a whole new perspective on the same card shuffling problem.